A sad song about a loved one that the songwriter will never see again.

Tag: songs

Remastered “Synaptic Flies” from Odes to My Triceratops, Vol. 3

I’m working afternoons this week, so before I waste more hours of my limited life coordinating the replacement of printers (on top of my usual tasks) in the hospital complex where I’m employed, I’ve remastered another song. This one’s about walking around with flies in your head. Six down, twelve to go.

Remastered “The Last Wait” from Odes to My Triceratops, Vol. 3

Rainy Sunday afternoon, so between episodes of Mushoku Tensei, I’ve decided to remaster another one of my songs. This time the winner was “The Last Wait,” a somber electronic piece about the sudden realization that there ain’t no getting out of one’s hole.

Remastered “Burying the Beast” from Odes to My Triceratops, Vol. 3

Another day, another remastered song. This time I’m redoing “Burying the Beast,” in which William Griffin confesses a crime. Now that I know proper audio mastering techniques, I hope I’ve done justice to such an awesome song.

Remastered “Behind the Door” from Odes to My Triceratops, Vol. 3

As you should know if you’ve been checking out my stuff, I’ve learned how to master audio properly, messing around with frequency bands and such. That has allowed me to remaster a couple of songs from the third volume of Odes to My Triceratops, and this time I wanted to improve “Behind the Door,” which may be my favorite among the seventy-five or so songs I’ve produced. For those of you who have read my novella Motocross Legend, Love of My Life, this song is partially inspired by it. I think it’s quite the haunting piece about being haunted by memories that will never come alive again.

I made this song before Udio, the AI service I use to produce music, improved its output quality, but hopefully it isn’t that noticeable in the remastered version. This is one you should check out wearing headphones.

Remastered “Fuck You, Life (cabaret version)” from Odes to My Triceratops, Vol. 3

Recently I opened up about my shameful troubles regarding audio mastering. Well, it seems I’ve hit my stride with what I learned by remastering “Go Away, Stay Away,” because I’ve pretty much nailed the last song of the album (I’m not remastering them in order), the grandiose, operatic “Fuck You, Life (cabaret version).”

I hope you enjoyed it. And if you didn’t, what’s wrong with you? Don’t you like good stuff?

Remastered “Go Away, Stay Away” from Odes to My Triceratops, Vol. 3

In the last post, I went on about my recent discovery of audio mastering techniques. It included my first remastered song whose frequency bands I had molested. Listening back, it was quite a mess. I decided that Audacity, rather than my abilities, was mainly responsible, so I acquired better audio editing software (namely iZotope RX, recommended by good ol’ castrated AI ChatGPT). Thanks to it, I have remastered the song “Go Away, Stay Away” into a version that I wouldn’t know how to improve any further. Check it out.

I’d say it sounds quite polished. The trick this time was to pick a segment of the song as the “baseline” for frequency band manipulation, and from then on to slightly raise or lower the bands of the other segments, making sure that the transitions into and out of that segment didn’t clash with the change in frequencies.

Anyway, I’ve got seventeen goddamn other songs to master, and that’s just in this album. I should also return to writing my novella one of these days.

On audio mastering (and a remastered song)

As I was “remastering” the songs that make up the third volume of Odes to My Triceratops, I started thinking, “surely there’s fancier stuff to do to improve a finished song’s quality than just messing around with its sound levels.” That ominous thought led me on a journey of a few days into the art of audio mastering. At one point, I opened one of my previous songs that I thought was finished, only to find out that the exporting process had clipped the hell out of it. I had no choice but to face that I had no fucking clue what I was doing.

Some reading, along with help from ChatGPT, led me to the following steps to master a song:

- Normalize original WAV at -1 dB.

- Save original WAV as a 24-bit/192 kHz stereo WAV file.

- Load exported WAV.

- High-pass filter at 30 Hz (24 dB per octave roll-off).

- Filter Curve EQ with preset (looked up good general values).

- Normalize at -1 dB.

- Apply multiband compression with the OTT plugin at 20% depth.

- Normalize at -1 dB.

- Split the stereo track and pan the channels to -70% and 70% respectively.

- Perform a thorough EQ check using the spectrum analyzer, adjusting frequencies along the way.

- Use the Limiter (Hard Limit) at -1 dB to ensure the track doesn’t peak.

- Normalize at -1 dB.
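
For anyone wondering what “normalize at -1 dB” boils down to, here is a minimal sketch in plain Python. It is not what Audacity runs internally; it only illustrates the idea of peak normalization: find the loudest sample and scale everything so that peak lands at the target level. The function names and the overdriven test tone are made up for the example, and it assumes samples are floats where 1.0 is full scale (0 dBFS).

```python
import math


def db_to_linear(db):
    """Convert a level in dBFS to a linear amplitude (0 dBFS == 1.0)."""
    return 10 ** (db / 20)


def peak_db(samples):
    """Return the peak level of the samples in dBFS."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak) if peak > 0 else float("-inf")


def normalize(samples, target_db=-1.0):
    """Scale every sample so the loudest one sits at target_db."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return list(samples)  # pure silence: nothing to scale
    gain = db_to_linear(target_db) / peak
    return [s * gain for s in samples]


def count_clipped(samples, ceiling=1.0):
    """Count samples at or beyond full scale, which would clip on export."""
    return sum(1 for s in samples if abs(s) >= ceiling)


# Hypothetical test signal: one second of a 440 Hz sine at 8 kHz,
# deliberately overdriven so that it would clip if exported as-is.
tone = [1.3 * math.sin(2 * math.pi * 440 * n / 8000) for n in range(8000)]
mastered = normalize(tone)

print("clipped samples before:", count_clipped(tone))
print("clipped samples after:", count_clipped(mastered))
print("peak after (dBFS):", round(peak_db(mastered), 2))
```

Normalizing only rescales the whole track, which is why the list repeats it after every step that changes the levels.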

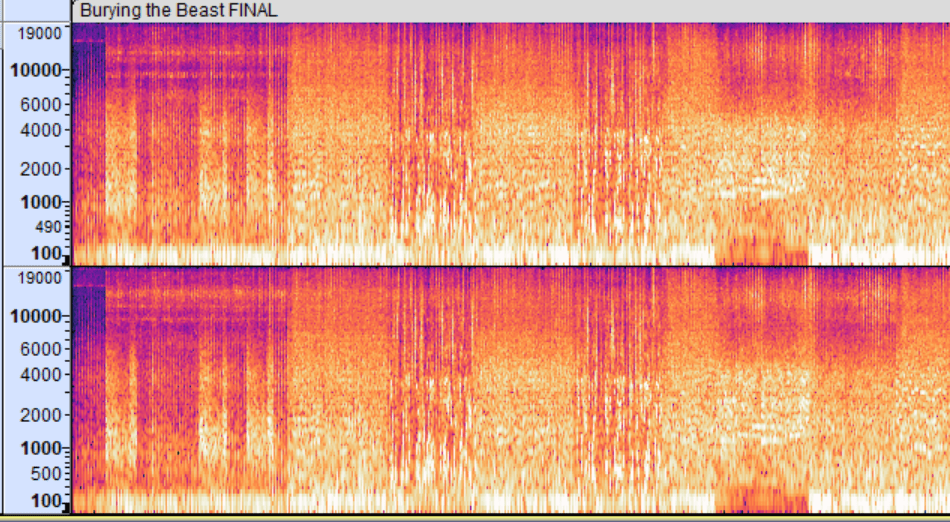

Until a few days ago, I thought a spectrogram was a medical procedure. I mean, check out this shit. Does it look like something that makes any sense?

Turns out that you can learn lots from it. The frequency spectrum of a song is divided into the following bands:

Sub-bass (20-60 Hz)

Role: Provides the deep, rumbling foundation. It’s felt more than heard.

Boost: To add depth and power, typically in electronic music or certain genres of pop and hip-hop.

Cut: If the mix sounds too muddy or overwhelming, especially in more acoustic or vocal-focused tracks.

Bass (60-250 Hz)

Role: Adds warmth and fullness. Key for the body of bass instruments and the kick drum.

Boost: To give more weight to bass instruments, kick drums, and overall warmth. If the bass feels weak, a slight boost around 60-100 Hz can add more punch.

Cut: To reduce muddiness and allow other elements to breathe.

Low Mids (250-500 Hz)

Role: Important for the body of most instruments, but can often introduce muddiness.

Boost: To add body and presence to guitars, vocals, and other midrange instruments.

Cut: To clear up muddiness and create space in the mix.

Midrange (500 Hz – 2 kHz)

Role: Critical for the presence of most instruments and vocals. Human ears are highly sensitive to this range.

Boost: To enhance clarity and presence of vocals and lead instruments.

Cut: If the mix sounds too harsh or congested.

Upper Midrange (2 kHz – 6 kHz)

Role: Contributes to the clarity and definition of sounds, especially for vocal intelligibility and instrument attack.

Boost: To add attack and clarity, making vocals and instruments stand out.

Cut: To prevent harshness and ear fatigue.

Presence (6 kHz – 10 kHz)

Role: Adds brightness and detail, crucial for the sense of “air” and openness.

Boost: To enhance the crispness and detail of vocals and percussion.

Cut: To soften overly bright or piercing sounds.

Brilliance (10 kHz – 20 kHz)

Role: Provides the sheen and sparkle that make a mix sound open and airy.

Boost: To add shimmer and airiness, particularly to cymbals and hi-hats.

Cut: To avoid excessive sibilance and hiss.
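
If you’d rather not memorize the list, the band edges above fit in a small lookup table. This is just a sketch: the `BANDS` table copies the ranges listed above, and `band_for` is a hypothetical helper name. Each upper edge is treated as belonging to the next band, except 20 kHz, which stays in Brilliance.

```python
# Band edges in Hz, copied from the list above.
BANDS = [
    ("Sub-bass", 20, 60),
    ("Bass", 60, 250),
    ("Low Mids", 250, 500),
    ("Midrange", 500, 2000),
    ("Upper Midrange", 2000, 6000),
    ("Presence", 6000, 10000),
    ("Brilliance", 10000, 20000),
]


def band_for(freq_hz):
    """Return the band name for a frequency, or None outside 20 Hz to 20 kHz."""
    for name, low, high in BANDS:
        if low <= freq_hz < high:
            return name
    # The very top of the audible range still counts as Brilliance.
    if freq_hz == BANDS[-1][2]:
        return BANDS[-1][0]
    return None


for freq in (45, 80, 440, 3000, 12000):
    print(freq, "Hz ->", band_for(freq))
```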

A glance at a spectrogram tells you a lot: if there’s too much heat in a frequency band, you likely need to lower it. If another band presents a significant void, you can boost it and bring to the forefront little details that you couldn’t even hear before. It’s quite amazing. Unfortunately, my obsessive attention to detail kicked in; the first time I tried to remaster “Burying the Beast,” I intended to go through each segment of the song boosting and lowering frequency bands to reach the optimal mix, but soon enough I was driven nuts. My job is already destroying me; I don’t need to work that hard in my spare time. So I fixed broad issues instead, boosting or lowering frequencies where it made sense.
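
The “too much heat” check can also be put into numbers. The sketch below measures what share of a signal’s spectral energy falls inside one band, using a naive discrete Fourier transform in plain Python. It is far too slow for a real song, where you’d use an FFT library or the analyzer built into your editor. The function name and the test signal are invented for the example.

```python
import cmath
import math


def band_energy_share(samples, sample_rate, low_hz, high_hz):
    """Fraction of the spectral energy that falls in [low_hz, high_hz)."""
    n = len(samples)
    total = 0.0
    in_band = 0.0
    # Naive DFT over the positive-frequency bins, skipping DC.
    for k in range(1, n // 2):
        coeff = sum(
            s * cmath.exp(-2j * math.pi * k * i / n)
            for i, s in enumerate(samples)
        )
        energy = abs(coeff) ** 2
        total += energy
        if low_hz <= k * sample_rate / n < high_hz:
            in_band += energy
    return in_band / total if total else 0.0


# Hypothetical test signal: a loud 100 Hz tone plus a quiet 1 kHz tone.
rate = 4000
signal = [
    math.sin(2 * math.pi * 100 * i / rate)
    + 0.1 * math.sin(2 * math.pi * 1000 * i / rate)
    for i in range(400)
]

bass_share = band_energy_share(signal, rate, 60, 250)
mid_share = band_energy_share(signal, rate, 500, 2000)
print("bass share:", round(bass_share, 3))
print("midrange share:", round(mid_share, 3))
```

Here nearly all the energy piles up in the bass band, the numeric equivalent of a hot streak at the bottom of the spectrogram and a void higher up.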

I present to you the remastered version of fan favorite (for this fan, at least) “Burying the Beast,” a song from my album Odes to My Triceratops:

It isn’t perfect by any means, but it’s much better than the previous version, so that works for me.

Tips on producing songs with Udio

Some months ago a revolutionary AI tool came out: Udio. It allows you to produce professional-sounding songs. Although I know how to play the guitar, I’ve always been, as a systems builder, more interested in putting songs together than learning how to play an instrument, and I also rarely enjoy interacting with people, so dealing with human musicians was out of the question. Udio has allowed me to come up with about seventy-five songs, so at this point I think I’m qualified to give tips on this subject.

I only start thinking about the musical side of things when I have the lyrics ready. They tend to change very little during production: mostly to make them sound better or rhyme, if the opportunity arises. I also add little touches like laughs, comments, and vocalizations like “aah,” “yeah,” and such, which tend to make the song sound more natural.

As far as I’m concerned, the lyrics don’t need to be elaborate. I mostly focus on sentences that transmit a particular emotion. I admire complex, very carefully-written lyrics like Joanna Newsom’s, but they wouldn’t work for the kind of songs I’ve wanted to make so far.

Once the lyrics seem ready, I pinpoint the stanza that will determine the general style of the entire song. It’s usually the chorus (I don’t write multi-chorus songs, so that’s easier to determine for me), or at least the part of the song that needs to be nailed to fit your mental image. Udio uses structural tags to help the AI determine your intention: [hook], [chorus], [verse], [bridge], and such. I don’t think I have ever started a song with a segment that wasn’t a [hook] or a [chorus].

Apart from structural tags, Udio’s AI was trained with loads of “mood” tags. I have collected as many as I could, which is an ongoing process, and I have relied on ChatGPT to classify them. For example, under “musical qualities” and “abstract” I have the following to choose from: “cryptic, complex, existential, dense, glitch, abstract, generative music, improvisation, mashup, eclectic, lobit, microtonal, minimalistic, sampling, silence, sparse, tone poem, uncommon time signatures”. All these tags are functional, and manipulate the generation in appropriate ways.

I go through all these mood tags and, using the same seed for the generations, I produce a few clips to get a feel for what I’d like the final song to sound like. More often than not, I don’t know what general genre the song will fall into. I base my choices on what my subconscious likes; an “I’ll know it when I see it” situation.

Once I’ve determined the mood of that particular segment, I go through my collection of instrument clips that I have painstakingly amassed from YouTube videos. Some time ago, I read through online lists of all the instruments in the world, then I determined which had matching tags in Udio. While pre-producing a song, I listen to each of those instruments one by one and let my subconscious decide if it would fit any of the stanzas. It’s a tedious process that usually takes about two hours, but it pays off in the end: the songs I have come up with would have been far less interesting otherwise.

Once I’m happy with the distribution of instruments, I go through a massive collection of genres, plenty of them bizarre (like psychobilly and cowpunk, two of my newly-discovered favorites), and ask Udio to generate loads of clips. If the style of an initial generation impresses me, I tag its name with its genre. If any of the generations is good enough that I would have gladly produced a whole song out of it, I mark it as “[name of song], Pt. 1 candidate.” If I end up with more than one candidate, but I’d rather discard them all but one, I pick the best, then I remix it by adding on top of it other genres whose associated generations had impressed me. That’s how I ended up with a mix of dance punk, surf rock, and cajun in “Paleontology of Pain.”

The best source I’ve found to learn more about genres is the fantastic site musicmap.info. You can zoom in on every supergenre, figure out how most genres relate to others, and listen to songs in those genres.

Once I’ve determined the best seed generation, always 33 seconds long, the real fun starts: I extend that segment in both directions to render the rest of the lyrics. I keep prompt strength at 70% (forcing Udio to mostly obey my prompt, but giving it some room for improvisation), lyrics strength at 35% (it sounds more natural, allowing the singer to repeat some words or hallucinate as Udio sees fit), and generation quality obviously at ultra.

The context length is extremely important: the AI will only rely on what you allow it to see when deciding how to style the new generation, so don’t include in the context a part of the song that you wouldn’t want to “tint” the extension you’re working on.

Along the way, you may love some generation except for a few seconds where the singer blurted out gibberish, some instrument could have sounded better, etc. That’s where inpainting comes in: it patches over those parts without altering the rest of the song. Note, though: inpainting in general sounds worse than full generations, particularly the drums. No idea if that’s something that the team behind Udio will be able to improve, so if you can trim the part of the song you would have inpainted and request a full generation instead, do that.

When I’m happy with the full song, I download its WAV file and open it in Audacity. Udio often screws up the sound levels, so I mess with them in Audacity until I’m happy with how the entire song sounds. Sometimes I screw it up myself and have to “remasterize” the song because I have inadvertently produced clicks, which was particularly noticeable in the version I uploaded of “Synaptic Flies.” Editing a song easily takes an hour, or up to an hour and a half.

That’s about it. You can check out my albums here. I have two of them ready, and in a few days I’ll upload the third volume of Odes to My Triceratops. I hope you have learned something from my obsessive attention to detail, in case you’re into this bizarre business of putting together AI-generated music. And if you read this far even though you weren’t interested, don’t you have better things to do with your time?

Song “Fuck You, Life (garage rock version)” from Odes to My Triceratops, Vol. 3

In case you don’t know, I’ve been obsessed with producing songs lately by exploiting the amazing AI service Udio. I’ve already made and released two full albums based on a strange story I wrote back in 2021, named Odes to My Triceratops. It follows the adventures and misadventures of a trio of friends who live in a town lost on the map. The main dude is a songwriter named William Griffin, who’s passionate and sensitive, if a bit unhinged. Another character is William’s next-door neighbor Claire Javernick, a blind redhead. Then we have Lorenzo, who’s a sentient triceratops for no justifiable reason. You can download the first two albums of this story through this link.

The last song of the third volume of Odes to My Triceratops (out of four) works great for me both as a cabaret/vaudeville song and as a garage rock song. This evening I present to you the garage rock version. Very cool tune, nice to let it play and have a good time.

Same lyrics as the cabaret version:

Lorenzo was a triceratops

With a portal to hell inside his throat.

Claire was my next-door neighbor,

As well as the love of my life.

She didn’t want to love me anymore,

Even though I did the best I could.

At this point, the only thing worth doing

Is saying, “Fuck you, life,” and turning away.

Fuck music and poetry!

Fuck the people you loved and trusted!

Fuck this pointless earthly world

And the stars in the heavens!

When I close my eyes,

I see the end:

A red curtain drops

On a blackened stage,

And the universe applauds

At my grand finale!

Anyway, what’s next? First I was writing a novel for about three years, then it went on hiatus so I could work on a novella, then I barely progressed on that novella as I started producing a fuckton of songs. There’s still the last stretch of William Griffin’s story to discover, but I will park that for now until I get through what remains of Motocross Legend, Love of My Life. I intended to edit those parts at work, but I’ve been beyond busy these past couple of months, burdened with the task of replacing hundreds of printers at the hospital complex where I’m employed.

At some point in this marathon of songs, my “readership” apparently gave up. Oh, well. I’ll continue doing what I’ve always done: exactly what my subconscious demands, as obsessively as necessary.
