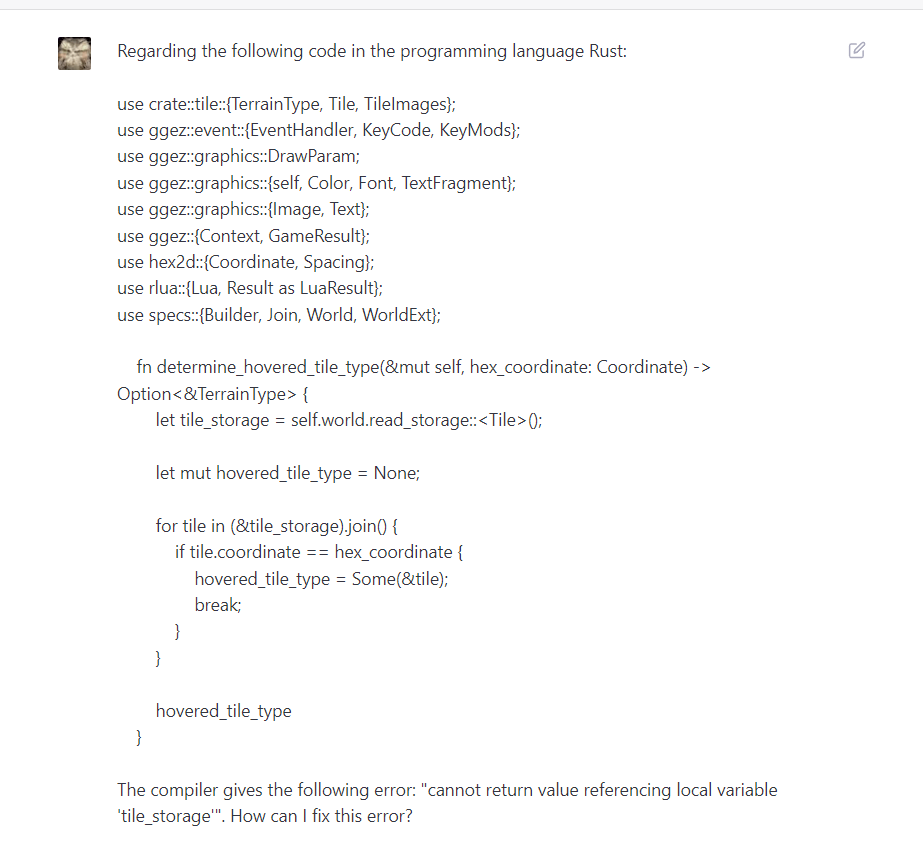

I had finished programming the non-visual part of Team Struggles (a part of the encounter system that checks character traits and psychological dimensions against some performance thresholds) when I had to face the fact that the game was loading too damn slowly. I admit, I have been a bit overeager demanding more anime photo IDs from Midjourney, and they are completely unoptimized, but still, I figured that this project could load much faster. So I came up with the following solutions:

- Lazy loading. Instead of loading encounter, biome, and photo ID images at once, just the image path is registered. Right before I need to draw a certain image, I check if it has been loaded, and if it hasn’t, I load it. That makes it so that the many images that won’t be seen in a particular testing session won’t need to be loaded at all. This change alone has sped up game loading significantly.

- Multithreading. This project didn’t feature any multithreading up to this point, as it is a static, 2D strategy game, but the process of loading the various parts (most of them from TOML files) could benefit from it. My previous experience with this subject involved trying to develop a Dwarf Fortress-like simulation in Python, only to realize that Python isn’t suited for multithreaded number crunching, nor for remotely big simulations at all: it is a slow, garbage-collected language, and its global interpreter lock (GIL) lets only one thread run Python code at a time. Rust, however, has mature crates that make multithreading relatively simple.
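The lazy-loading idea from the first bullet can be sketched roughly as follows. This is a minimal illustration, not the game's actual code: “FakeImage”, “LazyImages”, and the “loads_performed” counter are hypothetical names, and the real version would call the graphics library's image loader where the placeholder comment sits.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for a decoded image; the real game would use
/// whatever image type its graphics library provides.
#[derive(Debug, Clone, PartialEq)]
pub struct FakeImage {
    pub path: String,
}

/// Registers image paths up front and only loads an image on first use.
pub struct LazyImages {
    paths: HashMap<String, String>,     // image name -> file path
    loaded: HashMap<String, FakeImage>, // images loaded so far
    pub loads_performed: usize,         // how many real loads have happened
}

impl LazyImages {
    pub fn new() -> Self {
        Self {
            paths: HashMap::new(),
            loaded: HashMap::new(),
            loads_performed: 0,
        }
    }

    /// Cheap: only remembers where the image lives.
    pub fn register(&mut self, name: &str, path: &str) {
        self.paths.insert(name.to_string(), path.to_string());
    }

    /// Returns the image, loading it the first time it is requested.
    /// Returns None if the name was never registered.
    pub fn get(&mut self, name: &str) -> Option<&FakeImage> {
        if !self.loaded.contains_key(name) {
            let path = self.paths.get(name)?.clone();
            // Placeholder for the expensive disk read and decode.
            self.loaded.insert(name.to_string(), FakeImage { path });
            self.loads_performed += 1;
        }
        self.loaded.get(name)
    }
}
```

Registering every path at startup costs almost nothing, and an image that is never drawn during a session is never loaded at all.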

I asked GPT-4 to give me an overview of multithreading in the Rust programming language. It suggested a combination of the “rayon” and “crossbeam-channel” crates. The process works like this:

```rust
let (sender, ecs_receiver) = crossbeam_channel::unbounded();
```

You declare a sender and a receiver for a channel. The sender goes to the other thread, which uses it to push finished work onto a queue; the receiver stays on the main thread, which checks the queue for anything it can pull out. The channel doesn’t close after one message: it stays open for as long as at least one sender and one receiver are alive, so I assume that you could have a dedicated thread pumping pathfinding-related calculations back to the main thread.
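To illustrate that a channel can carry more than one message, here is a small sketch of a worker thread that keeps sending results. It uses the standard library's “std::sync::mpsc” channel rather than “crossbeam-channel” so that it has no dependencies; the two offer similar send and receive methods for this purpose. The function names and the squared numbers standing in for pathfinding work are made up for the example.

```rust
use std::sync::mpsc::{self, Receiver};
use std::thread;

/// Spawns a worker that sends several results over one channel.
/// Squaring numbers is a stand-in for real work such as pathfinding.
pub fn spawn_worker(jobs: Vec<u32>) -> Receiver<u32> {
    let (sender, receiver) = mpsc::channel();
    thread::spawn(move || {
        for job in jobs {
            // send() only fails if the receiver has been dropped.
            if sender.send(job * job).is_err() {
                break;
            }
        }
        // `sender` is dropped here, which disconnects the channel.
    });
    receiver
}

/// Blocks until the worker is done and collects everything it sent.
pub fn collect_all(receiver: Receiver<u32>) -> Vec<u32> {
    receiver.iter().collect()
}
```

In a game loop you would poll the receiver without blocking instead of calling something like “collect_all”, but the blocking version makes the ordering easy to see: messages arrive in the order they were sent.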

Spawning a thread is as easy as the following:

```rust
std::thread::spawn(move || {
    load_ecs_threaded(sender);
});
```

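The “move” keyword is the tricky part. It makes the closure take ownership of every variable it uses from the surrounding scope, so the main thread can’t touch those variables afterwards. If the main thread still needs a value, you can clone it and move the clone, but for data that both threads should share rather than duplicate, you wrap it in an “Arc” (“std::sync::Arc”, an atomically reference-counted pointer from the standard library) and clone that instead. Cloning an Arc is cheap: it only increments a reference count and never copies the data behind it.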
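A small sketch of how “move” and “Arc” fit together, using only the standard library. The function name and the summing workload are invented for the example.

```rust
use std::sync::Arc;
use std::thread;

/// Sums a shared list on another thread while the caller keeps its own handle.
pub fn sum_on_thread(data: &Arc<Vec<u32>>) -> u32 {
    // Cloning an Arc copies a pointer and bumps a reference count;
    // the Vec itself is not duplicated.
    let data_for_thread = Arc::clone(data);
    let handle = thread::spawn(move || {
        // `move` transfers ownership of `data_for_thread` into the closure.
        data_for_thread.iter().sum::<u32>()
    });
    // Wait for the thread and take its return value.
    handle.join().expect("worker thread panicked")
}
```

The caller's “Arc” is untouched by the call, so the main thread can keep reading the same data after the worker has been spawned.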

Anyway, “load_ecs_threaded(sender)” is in this case the function that will run in the spawned thread. The definition and contents are the following:

```rust
use crate::{
    gui::image_impl::ImageImpl,
    world::{create_world, ecs::ECS},
};

pub fn load_ecs_threaded(sender: crossbeam_channel::Sender<ECS<ImageImpl>>) {
    sender
        .send(create_world::<ImageImpl, ECS<ImageImpl>>())
        .unwrap();
}
```

That function merely sends, through the sender, the result of the “create_world” function, which registers all the necessary components with the “specs” Entity-Component System crate.

You won’t be able to check if the spawned threads have done anything unless you are running some sort of loop on the main thread. In this case I’m running the game with the 2D game dev “ggez” crate, which operates a simple, but well-working, game loop. From there, you need to rely on the “receiver” part of the channel to try to receive data:

```rust
if let Ok(ecs) = self.ecs_receiver.try_recv() {
    match self.shared_resources.try_lock() {
        Ok(mut bound_shared_resources) => {
            bound_shared_resources.set_ecs(ecs);
            self.progress_text = Text::new("Loaded Entity-Component System".to_string());
        }
        Err(error) => {
            return Err(GameError::CustomError(format!(
                "Couldn't lock shared resources to set the world instance. Error: {}",
                error
            )))
        }
    }
}
```

Through the call “ecs_receiver.try_recv()”, which never blocks, I will get either an Ok or an Err. An Err may just mean that the channel is empty because the function on the other thread hasn’t finished working (it can also mean that the sender was dropped), so we only act on Ok. In that case, the thread has finished doing its job. We take the result (the “ecs” in this case) and store it into our shared_resources as I did previously.
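If you want to tell “still loading” apart from “the worker is gone”, you can match on the error instead of ignoring it. The sketch below again uses “std::sync::mpsc” to stay dependency-free; “crossbeam-channel” has a “TryRecvError” with the same two variants. “LoadStatus” and “poll_loader” are hypothetical names.

```rust
use std::sync::mpsc::{Receiver, TryRecvError};

/// What the main loop learned from one poll of the channel.
#[derive(Debug, PartialEq)]
pub enum LoadStatus<T> {
    Ready(T),
    StillLoading,
    WorkerGone,
}

/// Polls once without blocking, so it is safe to call every frame.
pub fn poll_loader<T>(receiver: &Receiver<T>) -> LoadStatus<T> {
    match receiver.try_recv() {
        Ok(value) => LoadStatus::Ready(value),
        // Nothing has been sent yet, but a sender is still alive.
        Err(TryRecvError::Empty) => LoadStatus::StillLoading,
        // Every sender was dropped and the queue is empty.
        Err(TryRecvError::Disconnected) => LoadStatus::WorkerGone,
    }
}
```

Seeing “WorkerGone” before any “Ready” would suggest that the loading thread panicked or exited early, which is worth surfacing instead of showing a loading screen forever.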

That’s all. You need to be careful, though, because some types can’t be sent through channels: only types that implement Rust’s “Send” trait are allowed to cross threads, and the compiler rejects the rest. For example, you can’t send the graphical context of “ggez”, meaning that you always need to load images on the main thread. You also can’t send the random number generator through, as it’s explicitly tied to a single thread. But I haven’t found any issue sending my game structs.
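Whether a type can cross threads is decided at compile time by the “Send” trait. The sketch below shows one way to check it, plus a possible pattern for working around a non-sendable type: send plain data from the worker and build the main-thread-only value after receiving it. “MainThreadImage” is a made-up stand-in that is not “Send” because it holds an “Rc”; I haven’t tested this pattern against “ggez” itself.

```rust
use std::rc::Rc;
use std::sync::mpsc;
use std::thread;

/// Compiles only for types that may cross a thread boundary.
fn assert_send<T: Send>() {}

/// Hypothetical main-thread-only resource. `Rc` is not `Send`,
/// so neither is this struct.
pub struct MainThreadImage {
    pub pixels: Rc<Vec<u8>>,
}

/// Produces the raw bytes on a worker thread, then wraps them on the
/// calling thread.
pub fn load_pixels_then_wrap() -> MainThreadImage {
    assert_send::<Vec<u8>>();
    // assert_send::<MainThreadImage>(); // would fail to compile

    let (sender, receiver) = mpsc::channel();
    thread::spawn(move || {
        // Placeholder for reading and decoding a file.
        let _ = sender.send(vec![0u8, 127, 255]);
    });

    // Blocks until the worker has sent its bytes.
    let pixels = receiver.recv().expect("worker exited without sending");
    MainThreadImage {
        pixels: Rc::new(pixels),
    }
}
```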

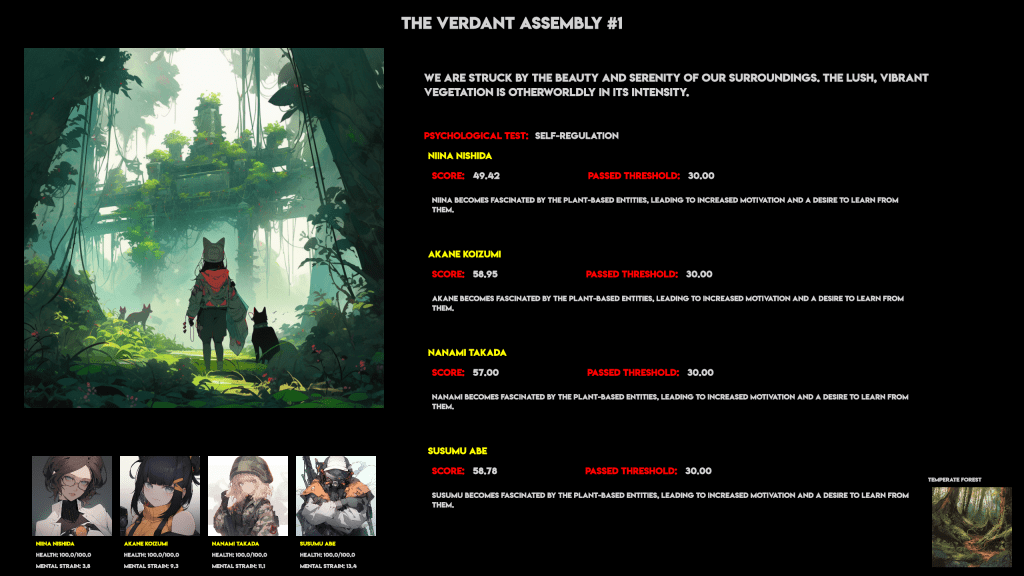

Now that the game doesn’t seem to freeze on launch, I can focus on implementing the visual aspect of Team Struggles.
