Read first the short story this post-mortem is about: That Feathered Bastard.

Through this cycle of fantasy stories, I’m exercising in tandem my two main passions in life: building systems and creating narratives. Every upcoming scenario, which turns into a short story, requires me to program new systems into my Living Narrative Engine, a browser-based platform for playing through immersive sims, RPGs and the like. Long gone are the scenarios that solely required me to figure out how to move an actor from one location to another, or to pick up an item, or to read a book. Programming the systems so I could play through the chicken coop ambush involved about five days of constant work on the codebase. I’ve forgotten everything I had to add, but off the top of my head:

- A completely new system for non-deterministic actions. Previously, all actions succeeded, given that the code has a very robust system for action discoverability: unless the context for an action is right, no actor can execute it to begin with. I needed a way for an actor to see “I can hit this bird, but my chances are 55%. I may not want to do this.” Once you have non-deterministic actions in a scenario, it becomes unpredictable, with the actors constantly having to navigate a changing state, which reveals more of their character.

- I implemented numerous non-deterministic actions:

  - Striking targets with blunt weapons, swinging at targets with slashing weapons, thrusting piercing weapons at targets. None of those ended up playing a part in this scenario, because the actors considered that keeping the birds alive was a priority, as Aldous intended.

  - Warding-related non-deterministic actions: drawing salt boundaries around corrupted targets (which Aldous originally said he was going to do, but the situation turned chaotic way too fast), and extracting spiritual corruption through an anchor, which Aldous did twice in the short.

  - Beak attacks, only available to entities whose body graphs have beak parts (so not only chickens, but also griffins, krakens, etc.). This got plenty of use.

  - Throwing items at targets. Bertram relied on this one in a fury. I got clever with the code: the damage caused by a thrown weapon, when the damage type is not specified, is logarithmically determined by the item’s weight. So a pipe produces 1 unit of blunt damage, and throwing Vespera’s instrument case at birds (which I did plenty of times during testing) would cause significant damage. Fun fact: throwing an item could have produced a fumble (a 96-100 result on a 1-100 roll), and that would have hit a bystander. Humorous when throwing a pipe, not so much an axe.

  - Restraining targets, as well as the chance for restrained targets to free themselves. Both of these got plenty of use.

  - A corrupting gaze. It was attempted thrice, if I remember correctly, once by the main vector of corruption and the other by that creepy one with the crooked neck. If it had succeeded, it would have corrupted the human target, and Aldous would have had to extract the corruption from them as well. That could have been interesting, but I doubt it would have happened in the middle of chickens flying all over.
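To make the roll mechanics above concrete, here is a minimal Python sketch, not the engine's actual code. The only details taken from the post are the 1-100 roll, the 96-100 fumble band, and the idea that a thrown item's damage grows logarithmically with its weight. The function names, the "roll at or under the chance succeeds" rule, and the exact logarithm formula are assumptions for illustration.

```python
import math
import random

FUMBLE_MIN = 96  # from the post: 96-100 on a 1-100 roll is a fumble


def resolve_attempt(chance_percent: int, roll: int) -> str:
    """Classify a 1-100 roll against the success chance shown to the actor."""
    if not 1 <= roll <= 100:
        raise ValueError("roll must be between 1 and 100")
    # The fumble band is checked first, so it overrides any success chance.
    if roll >= FUMBLE_MIN:
        return "fumble"
    # Assumption: rolling at or under the displayed chance is a success.
    return "success" if roll <= chance_percent else "failure"


def attempt(chance_percent: int, rng: random.Random) -> str:
    """Roll the die and resolve the attempt in one step."""
    return resolve_attempt(chance_percent, rng.randint(1, 100))


def thrown_damage(weight_kg: float) -> int:
    """Blunt damage for a thrown item that declares no damage type."""
    # Assumption: 1 + floor(log2(1 + weight)), so very light items deal 1.
    return 1 + math.floor(math.log2(1.0 + weight_kg))
```

With these assumed numbers, a 55% strike succeeds on rolls 1-55, fails on 56-95, and fumbles on 96-100, while a light pipe deals 1 point of damage and a heavy case deals more.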

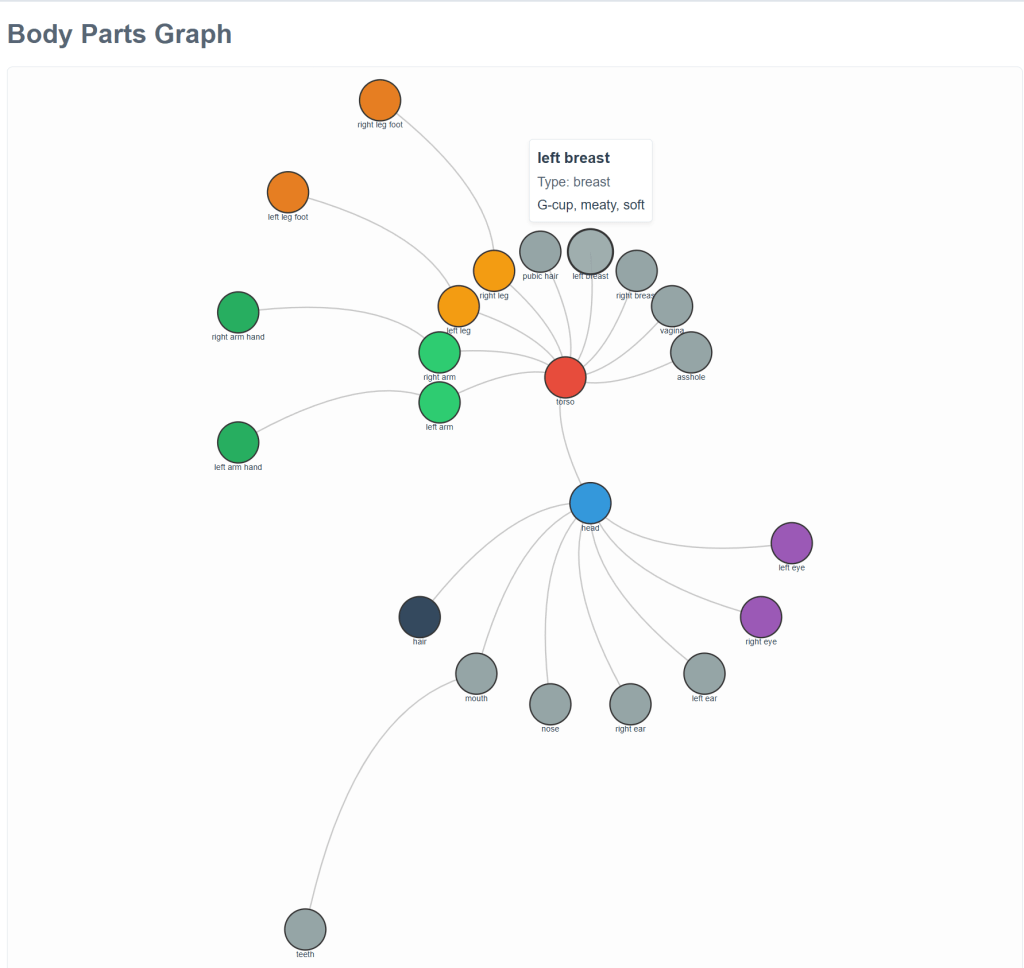

- Implementing actions that cause damage meant that I needed to implement two new systems: health and damage. Both would rely on the extensive anatomy system, which produces anatomy graphs out of recipes. What I mean by that is that we have recipes for roosters, hens, cat-girls, men, women. You specify in the recipe if you want strong legs, long hair, firm ass cheeks, and you end up with a literal graph of connected body parts. Noses, hands, vaginas exist as their own entities in this system. They can individually suffer damage. I could have gone insane with this, as Dwarf Fortress does, simulating even individual finger segments and non-vital internal organs. I may do something similar some day if I don’t have anything better to do.

  - Health system: individual body parts have their own health levels. They can suffer different tiers of damage. They can bleed, be fractured, poisoned, burned, etc. At an overall health level of 10%, actors enter a dying state. Suffering critical damage on a vital organ can kill creatures outright. During testing there were situations in which a head was destroyed, but the brain was still functioning well enough, so no death.

  - Damage system: weapons declare their own damage types and the status effects that could be applied. Vespera’s theatrical rapier can pierce but also slash, with specific amounts of damage. Rill’s practice stick only does low blunt damage, but can fracture.
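The "head destroyed, brain fine, no death" case is easier to see with a small model. This Python sketch is not the engine's code. It assumes a body is a tree of parts, that overall health is total current health divided by total maximum health, and that "critical damage" to a vital organ means its health reaching zero. All names and numbers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class BodyPart:
    name: str
    max_health: int
    health: int
    vital: bool = False
    children: list["BodyPart"] = field(default_factory=list)

    def walk(self):
        """Yield this part and every part attached beneath it."""
        yield self
        for child in self.children:
            yield from child.walk()


def apply_damage(part: BodyPart, amount: int) -> None:
    # Damage lands on one part only; it does not spread to its children.
    part.health = max(0, part.health - amount)


def actor_state(root: BodyPart) -> str:
    parts = list(root.walk())
    # Assumption: a vital organ at zero health kills outright.
    if any(p.vital and p.health == 0 for p in parts):
        return "dead"
    # Assumption: overall health = total current health / total maximum.
    overall = sum(p.health for p in parts) / sum(p.max_health for p in parts)
    return "dying" if overall <= 0.10 else "alive"


# A hypothetical hen: the brain is its own part inside the head.
brain = BodyPart("brain", 5, 5, vital=True)
head = BodyPart("head", 10, 10, children=[brain])
torso = BodyPart("torso", 30, 30, children=[head])

apply_damage(head, 10)  # the head is destroyed, the brain is untouched
state_after_head_loss = actor_state(torso)
```

Because the brain is a separate node, destroying the head alone leaves the actor alive in this sketch; only damage that reaches the brain itself triggers the outright death.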

Having a proper health and damage system, their initial versions anyway, revealed something troubling: simple unarmored combat with slashing weapons can slice off limbs and random body parts with realistic ease. Whenever I get to scenes involving more serious stakes than a bunch of chickens, stories are going to be terrifyingly unpredictable. Oh, and when body parts are dismembered, a corresponding body part entity gets spawned at the location. That means that any actor can pick up a detached limb and throw it at someone.
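The dismemberment behavior can be sketched roughly like this in Python. It is an illustration under assumptions, not the engine's implementation: `Part`, `Item`, `dismember`, and the "severed" naming are all hypothetical, and the world is reduced to a plain list of items.

```python
from dataclasses import dataclass, field


@dataclass
class Part:
    name: str
    children: list["Part"] = field(default_factory=list)


@dataclass
class Item:
    name: str
    location: str


def dismember(parent: Part, part_name: str, location: str,
              world_items: list[Item]) -> Item:
    """Detach a child part and spawn a matching item where it fell."""
    for child in parent.children:
        if child.name == part_name:
            # Remove the part from the anatomy graph...
            parent.children.remove(child)
            # ...and spawn a loose item that can be picked up like any other.
            item = Item(name=f"severed {child.name}", location=location)
            world_items.append(item)
            return item
    raise KeyError(part_name)
```

Since the spawned entity is an ordinary item in this sketch, the same pick-up and throw actions would apply to it without any special casing.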

Why go through all this trouble, other than the fact that I enjoy doing it and that it distracts me from the ocean of despair that surrounds me and that I can only ignore when I’m absorbed in a passion of mine? Well, over the many years of producing stories, what ended up boring me was that I went into a scene knowing all that was going to happen. Of course, I didn’t know the specifics of every paragraph, and most of the joy went into the execution of those sentences. But often I found myself looking up at the sequences of scenes to come, and it was like erecting a building when you already knew exactly how it was going to look. You start to wonder why even bother, when you can see it clearly in your mind.

And I’m not talking about that “plotter vs. pantser” dichotomy. Pantsing means you don’t know where you’re going, and all pantser stories, as far as I recall, devolve into messes that can’t be tied up neatly by the end. And of course they’re not going to go back and revise them to the extent necessary to make something coherent out of them. As much as I respect Cormac McCarthy, one of his best-written novels, if not the best, Suttree, is that kind of mess, which turns the whole thing into an episodic affair. An extremely vivid one that left many compelling, some harrowing, images in my brain, but still.

I needed the structure, with room for deviation, but I also needed to be constantly surprised by the execution of a scene. I wanted to go into it with a plan, only for the plan to fail to survive contact with the enemy. That’s where my Living Narrative Engine comes in. Now, when I experience a scene, I don’t know what the conversations are going to entail. I didn’t even come up with Aldous myself: Copperplate brought him up in the first scene when making up the details of the chicken contract. It was like that whole “Lalo didn’t send you” from Breaking Bad, which ended up producing a whole series. From that mention of Aldous, after an iterative process of making the guy interesting for myself, he ended up becoming a potter-exorcist I can respect.

I went into that chicken coop not knowing anything about what was going to happen other than the plan the characters themselves had. Would they overpower the chickens and extract the corruption out of them methodically with little resistance? Would any of the extraction attempts succeed? Would any actor fly into a rage, wield their weapons and start chopping off chicken limbs while Aldous complained? Would any of the characters suffer a nasty wound like, let’s say, a beak to the eye? I didn’t know, and that made the process of producing this scene thrilling.

Also, Vespera constantly failing at everything she tried, including two rare fumbles that sent her to the straw, was pure chance. It made for a more compelling scene from her POV; at one point I considered making Aldous the POV, as he had very intriguing internal processes.

Well, the scene wasn’t all thrilling. You see, after the natural ending when that feathered bastard pecked Vespera’s ass, the scene originally extended for damn near three-fourths of the original length. People constantly losing chickens, the rooster pecking at anyone in sight, Melissa getting frustrated with others failing to hold down the chickens, Rill doing her best to re-capture the chickens that kept wrenching free from her hold. Aldous even failed badly at two extractions and had to pick up the vessel again. It was a battle of attrition, which is what it would have been in real life. I ended up quitting, because I got the point: after a long, grueling, undignified struggle, the chickens are saved, the entity is contained in the vessel, and the actors exit back to the warm morning with their heads down, not willing to speak for a good while about what they endured.

Did the scene work? I’m not sure. It turned out chaotic, with its biggest flaw maybe being the repetition of attempts to catch chickens only for them to evade capture. There were more instances of this in the original draft, which I cut out. I could say that the scene was meant to feel chaotic and frustrating, and while that’s true, that’s also the excuse of those who say “You thought my story was bad? Ah, but it was meant to be bad, so I succeeded!” Through producing that scene, editing it, and rereading it, I did get the feeling of being there in that chaotic situation, trying to realistically accomplish a difficult task when the targets of the task didn’t want it completed, so if any reader has felt like that, I guess that’s a success.

I have no idea what anyone reading this short story must have felt or thought about it, but it’s there now, and I’ll soon move on to envision the next scenario.

Anyway, here are some portraits for the characters involved:

Aldous, the potter-exorcist

Kink-necked black pullet

Slate-blue bantam

White-faced buff hen

Large speckled hen

Copper-backed rooster
